Data security best practices in 2026 are no longer a compliance checkbox. They are the operational foundation that determines whether your organization weathers a breach in hours – or loses weeks, reputation, and revenue recovering from one.

Threat actors have accelerated. Regulatory bars have risen. And the attack surface has expanded dramatically as AI tools, third-party APIs, and omnichannel customer platforms multiply the number of systems that touch sensitive data. This guide delivers the implementation depth, governance models, and measurable metrics security leaders need to build genuine resilience – not just audit-ready documentation.

The 2026 Threat Landscape: Why Best Practices Must Evolve

Four Attack Vectors Defining This Year

Modern adversaries no longer rely on a single tactic. They blend credential theft, API exploitation, and identity manipulation into coordinated campaigns that are designed to bypass point solutions. The four dominant vectors demanding attention in 2026 are:

- Credential stuffing – automated attacks that exploit passwords reused across leaked databases

- Deepfake-powered social engineering – AI-generated audio and video targeting finance, legal, and executive signatories to authorize fraudulent transactions

- Shadow AI tooling – unsanctioned machine learning applications that silently exfiltrate proprietary data during model training or inference

- Supply chain implants – malicious code seeded through open-source package dependencies before distribution to thousands of downstream environments

The shadow AI vector deserves particular attention as organizations accelerate AI adoption. When teams deploy third-party AI tools – including chatbots, AI assistants, and automation platforms – without proper vetting, sensitive customer conversations and internal data can flow into systems outside your governance perimeter. Selecting platforms with transparent data handling practices and open-source auditability significantly reduces this risk. For teams evaluating AI chatbot deployments specifically, AI Chatbot Strategy 2026: Trends, Platform Comparison and Mistakes to Avoid outlines the key security and vendor assessment criteria to apply before going live.

Regulatory Catalysts Raising the Floor

Regulators globally now reference formal frameworks as minimum baselines. The NIST Cybersecurity Framework and the EU NIS2 Directive have become the yardsticks boards use to gauge security program maturity, rather than relying on annual audit results alone.

Organizations operating cloud-first or shared-responsibility infrastructure must document exactly where platform duties end and custom security obligations begin. Failing to articulate that boundary is one of the most common causes of audit failures, even among organizations with otherwise strong controls.

2026 Priority Heatmap: Impact vs. Effort

| Priority Practice | Maturity Indicator | Business Win |
|---|---|---|
| Adaptive identity and MFA | 100% of privileged accounts use phishing-resistant factors | Stops account-takeover fraud at the source |
| End-to-end encryption | All sensitive data owners enforce rotating keys and tamper logging | Preserves brand trust and regulatory compliance |
| DevSecOps automation | 90% of builds enforce SBOM and automated scanning | Cuts patch windows by up to 60% |
| Data classification refresh | Every data domain has an owner, label, and retention policy | Reduces incident response time dramatically |
| Continuous penetration testing | Quarterly purple team exercises with exec-level reporting | Informs investment decisions with evidence, not estimates |

Pro Tip: Pair each heatmap item with a cost-of-inaction statement. Budget conversations move faster when stakeholders understand the financial exposure of leaving a control gap open – not just the theoretical risk score.

Governance-Driven Data Security Best Practices

Zero Trust Policy Engineering

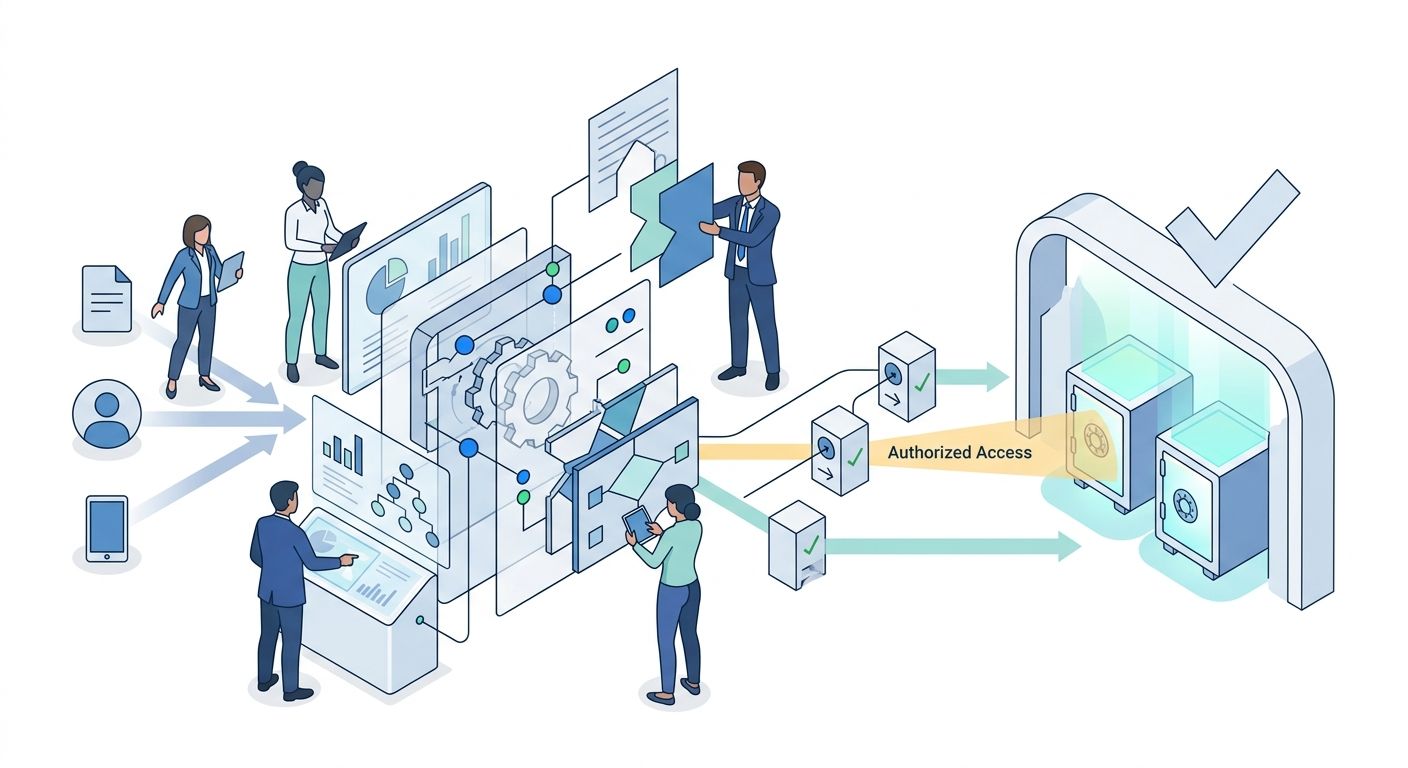

Zero trust is an architecture philosophy, not a product. Every implementation begins with context-aware authorization: map your identities, devices, and workloads first, then enforce least privilege at every layer.

A practical zero trust sequence:

- Define protect surfaces around critical datasets and applications – not network perimeters

- Require continuous verification using device health signals, behavioral analytics, and session risk scores

- Automate access revocation when anomalies are detected or contractor offboarding events occur

This governance-first approach keeps security controls aligned with actual business workflows rather than isolated within IT silos.
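The verification and revocation steps in the sequence above can be sketched as a small policy decision function. This is a minimal illustration, not a reference implementation: the `AccessRequest` fields, the `decide` function, and the `RISK_THRESHOLD` value are all hypothetical names and numbers chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    user: str
    device_healthy: bool   # e.g. patched OS and disk encryption enabled
    session_risk: float    # 0.0 (benign) to 1.0 (hostile), from behavioral analytics
    offboarded: bool       # set by an offboarding event for staff or contractors


# Illustrative threshold only; tune it per protect surface.
RISK_THRESHOLD = 0.7


def decide(request: AccessRequest) -> str:
    """Return 'allow', 'step_up', or 'revoke' for a single access request."""
    # Offboarding always wins: access is revoked regardless of other signals.
    if request.offboarded:
        return "revoke"
    # An unhealthy device or a high-risk session ends the session.
    if not request.device_healthy or request.session_risk >= RISK_THRESHOLD:
        return "revoke"
    # Moderate risk triggers re-verification instead of a hard block.
    if request.session_risk >= RISK_THRESHOLD / 2:
        return "step_up"
    return "allow"
```

Evaluating `decide` on every request, rather than once at login, is what makes the verification continuous.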

Granular Data Classification and Access Control

Classification is the structural foundation beneath every downstream security control. Without it, entitlements become ad hoc, retention policies are unenforceable, and incident response lacks the triage clarity needed to contain damage quickly.

Start by cataloging all systems, assigning data owners, and mapping legal drivers – GDPR, HIPAA, PCI DSS, or sector-specific regulations.

A practical four-tier classification model:

- Public – marketing collateral and blog content with no confidentiality exposure

- Internal – financial forecasts, sprint backlogs, and internal communications

- Confidential – client PII, source code, and proprietary algorithms

- Restricted – merger documents, cryptographic keys, and regulated health or financial data

Pair each tier with enforcement mechanisms: attribute-based access controls, dynamic data masking, and automated de-provisioning when role changes occur. This creates a resilient access lattice where the blast radius of any single compromise is limited by design.
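As a rough sketch of how the four tiers could drive enforcement, the example below models the tiers as an ordered enum, applies a simplified clearance check, and masks values the caller is not cleared to see. The `Tier`, `can_read`, `mask`, and `read_field` names are hypothetical, and a real attribute-based policy would weigh more attributes than a single clearance level.

```python
from enum import IntEnum


class Tier(IntEnum):
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


def can_read(clearance: Tier, record_tier: Tier) -> bool:
    """A caller may read records at or below their clearance tier."""
    return clearance >= record_tier


def mask(value: str, visible: int = 4) -> str:
    """Replace all but the last `visible` characters with asterisks."""
    if len(value) <= visible:
        return "*" * len(value)
    return "*" * (len(value) - visible) + value[-visible:]


def read_field(clearance: Tier, record_tier: Tier, value: str) -> str:
    """Return the raw value if cleared, otherwise a masked version."""
    return value if can_read(clearance, record_tier) else mask(value)
```

Because the tiers are ordered, a compromise of an Internal-level account yields only masked output for Confidential and Restricted fields.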

Lifecycle Vendor Oversight and Shared Responsibility

Every third-party tool that touches your data extends your attack surface. Maintain a vendor register that documents contract scope, data categories handled, and current security attestations such as SOC 2 Type II or ISO 27001.

Warning: A compliance certificate proves an organization passed an audit at a point in time – not that it operates securely today. Supplement certifications with evidence reviews, security questionnaires, and defined escalation paths tied to business-level impact thresholds.
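One way to keep point-in-time attestations from going stale unnoticed is to store their dates in the vendor register and flag anything older than a review window. The sketch below assumes a hypothetical `VendorRecord` structure and a 365-day policy; both are illustrative choices, not requirements of SOC 2 or ISO 27001.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class VendorRecord:
    name: str
    data_categories: list[str]   # e.g. ["customer PII", "chat transcripts"]
    attestation: str             # e.g. "SOC 2 Type II" or "ISO 27001"
    attestation_date: date       # date the report or certificate was issued


# Assumed internal policy: re-review evidence at least once a year.
MAX_ATTESTATION_AGE = timedelta(days=365)


def needs_review(vendor: VendorRecord, today: date) -> bool:
    """True when the vendor's latest attestation is older than the policy allows."""
    return today - vendor.attestation_date > MAX_ATTESTATION_AGE


def stale_vendors(register: list[VendorRecord], today: date) -> list[str]:
    """Names of vendors whose evidence should be refreshed."""
    return [v.name for v in register if needs_review(v, today)]
```

A scheduled job could run `stale_vendors` against the register and open a review ticket for each name it returns.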

For critical vendors, co-create incident response playbooks that define containment steps, notification timelines, and forensic responsibilities for both parties. This shared operational clarity converts vendor oversight from a procurement exercise into a co-managed security posture.

This principle applies directly to AI and chatbot platforms that handle customer conversations. Organizations deploying omnichannel messaging tools should verify that their vendor can demonstrate data isolation between clients, clear retention policies, and transparent access logging. For guidance on what responsible AI customer care deployment looks like from both a capability and data governance perspective, see Customer Care Chatbot Development: The Complete 2026 Guide for Businesses.

Reference the CISA Secure Software Development Attestation to guide contractual language and acceptance criteria when onboarding new vendors.

Technical Controls: The Security Architecture Layer

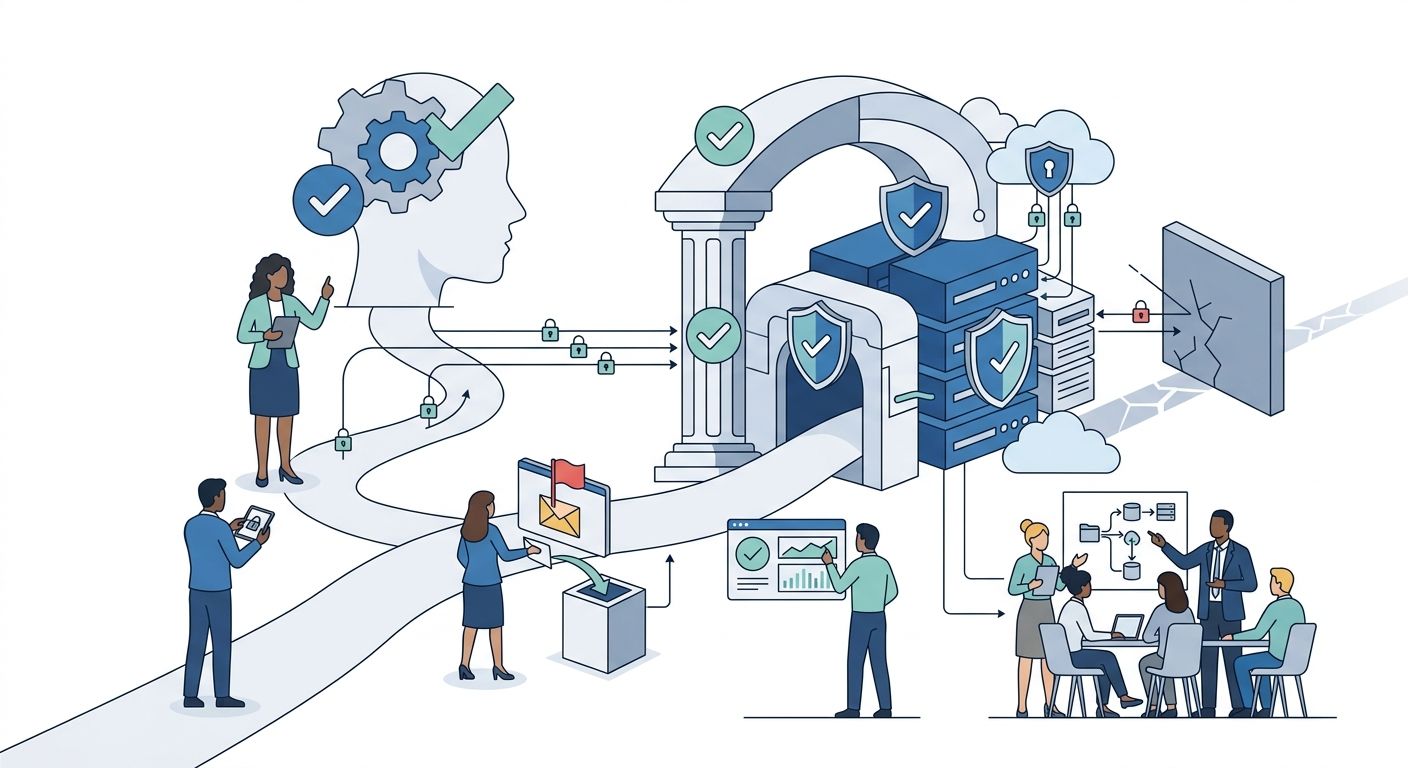

Identity, MFA, and Adaptive Access Management

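Passwords alone cannot withstand modern credential harvesting. Enforce phishing-resistant MFA across the organization: FIDO2 security keys, passkeys, or certificate-based smart cards. Push confirmations, even when tied to verified device posture, remain exposed to prompt bombing and real-time phishing proxies, so treat them as a transitional factor rather than a phishing-resistant one.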

Complement authentication with conditional access policies that evaluate geolocation, impossible travel signals, and unusual API usage patterns – automatically locking sessions when risk exceeds defined thresholds. Feed identity telemetry into your SIEM and XDR platforms for unified detection and correlation.
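An impossible travel signal can be approximated by comparing the great-circle distance between two logins with the time between them. The sketch below uses the haversine formula. The `Login` type, the 900 km/h speed ceiling, and the 50 km jitter allowance are assumptions for illustration, and production systems typically also account for VPN exits and imprecise IP geolocation.

```python
import math
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Login:
    lat: float
    lon: float
    at: datetime


EARTH_RADIUS_KM = 6371.0
MAX_PLAUSIBLE_KMH = 900.0   # roughly airliner cruise speed (assumed ceiling)
MIN_DISTANCE_KM = 50.0      # ignore small moves caused by geolocation jitter


def haversine_km(a: Login, b: Login) -> float:
    """Great-circle distance between two login locations in kilometers."""
    phi1, phi2 = math.radians(a.lat), math.radians(b.lat)
    dphi = phi2 - phi1
    dlmb = math.radians(b.lon - a.lon)
    h = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


def is_impossible_travel(prev: Login, curr: Login) -> bool:
    """True when the implied speed between two logins exceeds the ceiling."""
    distance = haversine_km(prev, curr)
    if distance < MIN_DISTANCE_KM:
        return False
    hours = (curr.at - prev.at).total_seconds() / 3600
    if hours <= 0:
        return True  # far apart at the same instant
    return distance / hours > MAX_PLAUSIBLE_KMH
```

A positive result is better treated as one input to the session risk score than as an automatic lockout, since legitimate VPN use produces the same pattern.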

Pro Tip: Roll out MFA in tiers to manage change fatigue. Start with privileged identities, expand to high-risk SaaS applications, then extend to the general workforce. Each tier should have its own enablement plan and rollback procedure.

Encryption, Tokenization, and Secure Key Custody

Encrypt data in transit using TLS 1.3, whose ephemeral (EC)DHE key exchanges provide forward secrecy. Encrypt data at rest using an authenticated cipher such as AES-256-GCM or ChaCha20-Poly1305. Tokenize PII inside analytics and reporting workflows so teams can derive insights without ever touching raw values.
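For analytics workflows, one lightweight approach is to replace PII with a keyed hash, so that joins and counts still work but raw values never reach the reporting layer. Strictly speaking this is pseudonymization rather than vault-based tokenization, because the token cannot be reversed to the original value. The `tokenize` helper and the `tok_` prefix are hypothetical, and the key is assumed to live in an HSM or secrets manager rather than in code.

```python
import hashlib
import hmac


def tokenize(value: str, key: bytes) -> str:
    """Derive a stable, non-reversible token from a PII value.

    The same value and key always produce the same token, so analysts can
    group and join on it without seeing the underlying data.
    """
    normalized = value.strip().lower()
    digest = hmac.new(key, normalized.encode("utf-8"), hashlib.sha256).hexdigest()
    # Truncate to 128 bits of the digest to keep tokens compact.
    return "tok_" + digest[:32]
```

Rotating the key changes every token, which is useful for revocation but breaks joins against historical data, so key rotation for tokens needs its own plan.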

Hardware Security Module (HSM) deployment – pros and cons:

- Pros: Tamper-resistant key storage that satisfies stringent regulatory requirements; cryptographic offloading improves performance consistency at scale

- Cons: Higher upfront cost and integration complexity in hybrid cloud environments; requires specialized expertise to manage firmware and redundancy configurations

Tie all key lifecycle events – rotation, revocation, and escrow – to monitoring alerts so every cryptographic action is provable during audits. Always use vetted open standards such as the Signal Protocol rather than homegrown cryptographic implementations.
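One way to make key lifecycle events provable after the fact is a hash-chained log, in which each entry commits to the one before it. This is an illustrative technique, not something the controls above prescribe. The `append_event` and `verify` helpers are hypothetical, and a production system would also sign entries or anchor the chain in write-once storage.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64


def _entry_hash(prev_hash: str, event: dict) -> str:
    """Hash the previous entry's hash together with this event's content."""
    payload = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_event(log: list, event: dict) -> None:
    """Append a key lifecycle event, chaining it to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    log.append({"event": event, "prev": prev_hash, "hash": _entry_hash(prev_hash, event)})


def verify(log: list) -> bool:
    """Recompute the chain; any edited or reordered entry breaks it."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _entry_hash(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True
```

An auditor who holds only the latest hash can detect changes to any earlier rotation, revocation, or escrow record.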

Secure DevSecOps Pipelines

Development velocity must not erode security stewardship. Embed controls directly into CI/CD pipelines so security is validated on every build – not reviewed once per quarter.

A production-grade DevSecOps pipeline should:

- Mandate signed commits and branch protections to preserve code provenance

- Run automated SAST/DAST scanning and dependency checks on every build artifact

- Enforce infrastructure-as-code guardrails using policy-as-code engines

- Generate and track software bills of materials (SBOMs) for all production services

Benchmark pipeline maturity against the OWASP SAMM framework to identify gaps and prioritize remediation investments.
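The pipeline requirements listed above can be expressed as a simple gate that fails a build when any control is missing. This is a sketch under assumptions: `BuildReport` and `gate` are hypothetical names, and the report is presumed to be assembled from whatever scanners and SBOM tooling the pipeline already runs.

```python
from dataclasses import dataclass, field


@dataclass
class BuildReport:
    commits_signed: bool
    sbom_present: bool
    # Scanner findings keyed by severity, e.g. {"critical": 0, "high": 2}
    findings: dict = field(default_factory=dict)


# Assumed policy: these severities block a release.
BLOCKING_SEVERITIES = ("critical", "high")


def gate(report: BuildReport) -> list[str]:
    """Return the reasons a build should fail; an empty list means it passes."""
    reasons = []
    if not report.commits_signed:
        reasons.append("unsigned commits")
    if not report.sbom_present:
        reasons.append("missing SBOM")
    for severity in BLOCKING_SEVERITIES:
        count = report.findings.get(severity, 0)
        if count > 0:
            reasons.append(f"{count} {severity} finding(s)")
    return reasons
```

Returning reasons instead of a bare boolean gives developers an actionable message in the CI log.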

Note: When integrating AI code assistants into development workflows, restrict training data sources, log all prompts, and audit outputs for proprietary code fragments. The same governance logic that applies to customer-facing AI tools applies equally to developer-facing ones.

Operationalizing Security Across the Organization

Building a Security-First Culture

Human behavior remains the decisive variable in nearly every breach pathway. A compliance-only training program – annual video, quiz, checkbox – does not change behavior under pressure. A blended learning program does.

Effective security culture programs in 2026 combine microlearning modules, realistic phishing simulations, gamified competitions that reward secure behaviors, and role-specific content that connects abstract controls to actual daily workflows.

Adoption sequence that drives measurable change:

- Launch with executive-backed messaging where leaders publicly complete the same training as all staff

- Track participation and simulation performance by department, adjusting content for role relevance quarterly

- Deploy security champions in each business unit who answer workflow-specific questions and escalate patterns to the central team

Incident Response and Crisis Readiness

Preparation quality is one of the strongest predictors of breach containment time. Maintain a living runbook covering detection, containment, eradication, recovery, and post-incident analysis – with named owners, escalation contacts, and regulatory notification obligations documented for each phase.

During active incidents, a structured decision log that links containment actions to data classification levels ensures that security best practices continue to drive priorities even under operational stress.

Pro Tip: Conduct quarterly tabletop exercises that inject scenarios your team has not rehearsed: third-party failures, insider threats, and AI tool misuse are three high-value scenarios for 2026.

Tooling Selection and Platform Consolidation

Point solutions breed alert fatigue and visibility gaps. A consolidated platform strategy that integrates identity, telemetry, orchestration, and workflow automation produces better detection outcomes at lower analyst overhead.

When evaluating security platforms, apply a consistent scorecard:

- Integration depth with identity providers, cloud infrastructure, and ticketing systems

- Native automation playbooks for credential revocation, database isolation, and forensic data capture

- Transparent, usage-based pricing rather than opaque tier structures that obscure true cost of ownership

This consolidation principle extends beyond pure security tooling. Customer communication platforms that handle sensitive data – including AI chatbots and omnichannel messaging tools – should be held to the same evaluation standard. ChatbotX, for example, is an open-source omnichannel platform, which means security teams can audit the codebase directly rather than relying solely on vendor attestations. For organizations running customer communications across WhatsApp, Messenger, Zalo, and WebChat, this transparency is a meaningful differentiator when assessing third-party data risk. The guide Cross-Platform Chatbots: The Definitive 2026 Guide for Unified Customer Experience explores how to structure cross-channel deployments with data governance built in from the start.

Warning: Automation without governance amplifies mistakes at machine speed. Always embed human approval gates for destructive or high-impact actions such as mass account disabling or bulk data deletion.
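A human approval gate can be as simple as refusing to run a destructive playbook unless an approver is recorded. The sketch below is illustrative: the action names, `ApprovalRequired`, and `run_playbook` are hypothetical, and a real orchestration platform would verify the approver's identity and authority rather than accept a string.

```python
from typing import Optional

# Assumed set of actions that must never run unattended.
DESTRUCTIVE_ACTIONS = {"mass_account_disable", "bulk_data_delete"}


class ApprovalRequired(Exception):
    """Raised when a destructive action is attempted without sign-off."""


def run_playbook(action: str, target_count: int, approved_by: Optional[str] = None) -> str:
    """Execute a playbook step, blocking destructive actions that lack approval."""
    if action in DESTRUCTIVE_ACTIONS and not approved_by:
        raise ApprovalRequired(f"{action} on {target_count} target(s) needs human approval")
    # Placeholder for the real automation call.
    suffix = f" (approved by {approved_by})" if approved_by else ""
    return f"executed {action} on {target_count} target(s){suffix}"
```

Low-impact actions such as revoking a single session still run at machine speed, and only the high-impact ones wait for a person.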

Measurement, Audits, and Continuous Improvement

The Core Metrics Dashboard

Security posture becomes boardroom-legible when tracked with quantifiable KPIs that show trends over time – not just point-in-time compliance status.

| Metric | Formula | Target Benchmark |
|---|---|---|
| Mean Time to Detect (MTTD) | Average time from compromise to alert | Under 30 minutes for Tier-1 assets |
| Mean Time to Respond (MTTR) | Average time from alert to containment | Under 2 hours for critical events |
| Privileged Access Coverage | Accounts with adaptive MFA / total privileged accounts | 100% |
| Data Classification Accuracy | Correctly labeled records in an audit sample / total records sampled | Above 95% |
| Patch Lag | Days between vendor patch release and deployment | Fewer than 7 days for critical CVEs |

Compare KPIs across business units so lagging teams receive targeted coaching and automation support rather than generic remediation mandates.
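The dashboard metrics reduce to a few small calculations over incident and asset records. The helpers below are a minimal sketch with hypothetical names, and they assume timestamps for compromise, alert, and containment are already available from your incident tooling.

```python
from datetime import date, datetime, timedelta
from statistics import mean


def mean_minutes(intervals: list) -> float:
    """Average duration in minutes over (start, end) datetime pairs.

    Pass (compromise, alert) pairs for MTTD or (alert, containment) for MTTR.
    """
    return mean([(end - start).total_seconds() / 60 for start, end in intervals])


def ratio_percent(numerator: int, denominator: int) -> float:
    """Percentage helper for coverage and accuracy metrics."""
    return 0.0 if denominator == 0 else 100 * numerator / denominator


def patch_lag_days(patch_released: date, deployed: date) -> int:
    """Whole days between a vendor's patch release and deployment."""
    return (deployed - patch_released).days
```

For example, two incidents detected after 20 and 40 minutes give an MTTD of 30 minutes, which sits exactly on the Tier-1 target boundary rather than under it.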

Penetration Testing and Purple Team Exercises

Automated scanning finds known vulnerability patterns. Human creativity finds the edge cases and logic flaws that scanners miss. A mature testing program combines both.

Schedule annual external penetration tests plus quarterly internal purple team exercises where red and blue teams co-design detection use cases. Route all findings to governance committees that can prioritize remediation funding based on actual business impact – not just CVSS scores.

Rotate third-party testing firms every 18 months to ensure fresh methodology and eliminate familiarity blind spots.

AI-Assisted Continuous Controls Monitoring

Modern telemetry volumes exceed human-scale analysis capacity. Deploy UEBA, ML-driven anomaly detection, and automated policy verification to forecast control drift before it becomes a breach pathway.

Feed AI detection models with sanitized, labeled datasets that comply with your own data governance standards – the same standards you would apply to any third-party vendor. Pair all AI detections with human validation checkpoints to prevent false positives from desensitizing analysts to real signals.
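To illustrate the pairing of automated detection with human validation, the sketch below flags outliers using a basic z-score against a baseline and queues them for analyst review instead of acting on them. It stands in for far richer UEBA models. The `flag_for_review` name, the threshold of 3.0, and the `pending_review` status are assumptions for the example.

```python
from statistics import mean, pstdev


def flag_for_review(baseline: list, observed: list, threshold: float = 3.0) -> list:
    """Queue observations that deviate strongly from the baseline.

    Returns review items rather than taking action, so an analyst
    confirms each detection before any response is triggered.
    """
    mu = mean(baseline)
    sigma = pstdev(baseline)
    queue = []
    for index, value in enumerate(observed):
        if sigma == 0:
            anomalous = value != mu  # flat baseline: any change is notable
        else:
            anomalous = abs(value - mu) / sigma > threshold
        if anomalous:
            queue.append({"index": index, "value": value, "status": "pending_review"})
    return queue
```

Tracking how many queued items analysts confirm versus dismiss gives a direct measure of false-positive load, which can then guide threshold tuning.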

Emerging continuous controls monitoring platforms can stream compliance evidence directly into audit portals, cutting annual audit preparation time by up to 50 percent and maintaining continuous regulator trust between formal reviews.

For organizations exploring how AI integrations – including chatbots and automated workflows – affect their data governance posture, 13 Ways AI Improves Customer Experience in 2026 addresses how AI deployments can be structured to balance capability with responsible data handling.

Frequently Asked Questions

How often should organizations reassess their data security best practices?

Conduct a lightweight review every quarter and a comprehensive overhaul annually, or immediately following major architectural changes, acquisitions, or significant regulatory updates. The threat landscape and your own infrastructure both shift continuously – security programs that review controls only at fixed intervals accumulate undetected drift.

What is the most cost-effective starting point for building data security best practices?

Identity hardening paired with data classification delivers the fastest ROI for most organizations. Locking down privileged accounts, enforcing phishing-resistant MFA, and labeling data by sensitivity tier makes every downstream control more targeted and economical – because you know what you are protecting and who should have access.

How do data security best practices apply to AI tools and machine learning initiatives?

Govern training data carefully, enforce strict retention limits, and isolate models in secured, auditable environments. Apply the same vendor assessment criteria to AI platforms that you would apply to any third-party system that handles sensitive data – including reviewing data residency, access logging, and breach notification procedures. The guide What Is an AI Chatbot? Benefits and How to Deploy One Effectively for Your Business covers data handling considerations specific to conversational AI deployments.

Which metrics communicate security program value most effectively to executives?

Executives respond to blended metrics that connect security investment to business outcomes: MTTD and MTTR as operational efficiency indicators, classification accuracy as a data governance signal, and cost-of-incident-avoidance as a financial framing. Present trendlines with brief narratives showing how specific investments reduced measurable exposure or accelerated a compliance milestone.

Do small organizations need formal data security frameworks?

Yes – scaled-down frameworks provide essential clarity without overwhelming limited resources. Even early-stage companies can define data owners, enforce MFA on critical systems, restrict third-party access, and schedule basic penetration tests. Starting with these foundational controls ensures that growth does not outpace governance, which is when the most costly and reputationally damaging breaches tend to occur.

Build a Security Foundation Your Organization Can Trust

Data security best practices are not a project with a completion date. They are a continuous operating model – one that evolves alongside the threat landscape, your technology stack, and the regulatory environment.

The organizations that build genuine resilience in 2026 share a common pattern: they treat security as a business capability, not an IT function. They invest in governance before tools, classify data before encrypting it, and choose vendors based on demonstrated transparency rather than marketing claims.

Every customer conversation your business conducts – across WhatsApp, Messenger, Zalo, or live chat – represents a data flow that deserves the same security scrutiny as your internal systems. ChatbotX is an open-source omnichannel AI platform built with that principle in mind. Because the codebase is publicly auditable on GitHub, your security team can verify data handling behavior directly – not take it on faith. Combine that transparency with enterprise-grade features like role-based access, unified inbox controls, and AI Agents that handle customer interactions without exposing backend data, and you have a messaging infrastructure that complements rather than undermines your security posture.

Start a free 7-day trial on ChatbotX → No credit card required. Full platform access from day one.